Power, cooling, and the opportunity to rethink where compute happens

Series: Sustainable Reality Reflections — Decisions in Emerging Technologies

Modern computing infrastructure has been built on a powerful assumption.

If demand increases, we scale. More compute, more storage, more capability. Systems expand to meet the needs placed on them.

For years, that model has worked. At SR25, Mark Bjornsgaard outlined why that assumption is now under pressure. Not because demand is slowing, but because the physical limits of computing are becoming harder to ignore.

The problem is not demand

The challenge facing modern infrastructure is not a lack of ambition. It is the cost of supporting it. AI workloads, accelerator-heavy systems, and increasingly dense compute environments are driving a sharp increase in power consumption, and every step up in scale raises the energy needed to run them.

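At the same time, that energy must be managed. Almost all of the electricity consumed by computing hardware is ultimately released as heat, so heat becomes a constraint in its own right. Cooling systems must handle concentrated thermal loads. Facilities must be designed not just to house compute, but to sustain it.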

This introduces a different kind of limit. It is no longer enough to ask how much compute can be deployed. The question becomes whether it can be powered and cooled effectively.

The limits of centralisation

Much of today’s infrastructure is built around centralised data centres. This model has clear advantages. It simplifies management, concentrates expertise, and allows for economies of scale. But it also concentrates power demand and heat generation.

As workloads grow, these centralised systems become increasingly difficult to scale. Power delivery becomes a bottleneck. Cooling systems are pushed to their limits. Expanding capacity may require significant changes to physical infrastructure. In this context, simply building larger data centres is not always the most effective path forward.

From constraint to opportunity

Mark’s talk did not present these challenges as a dead end. Instead, it reframed them as an opportunity to rethink how compute is deployed.

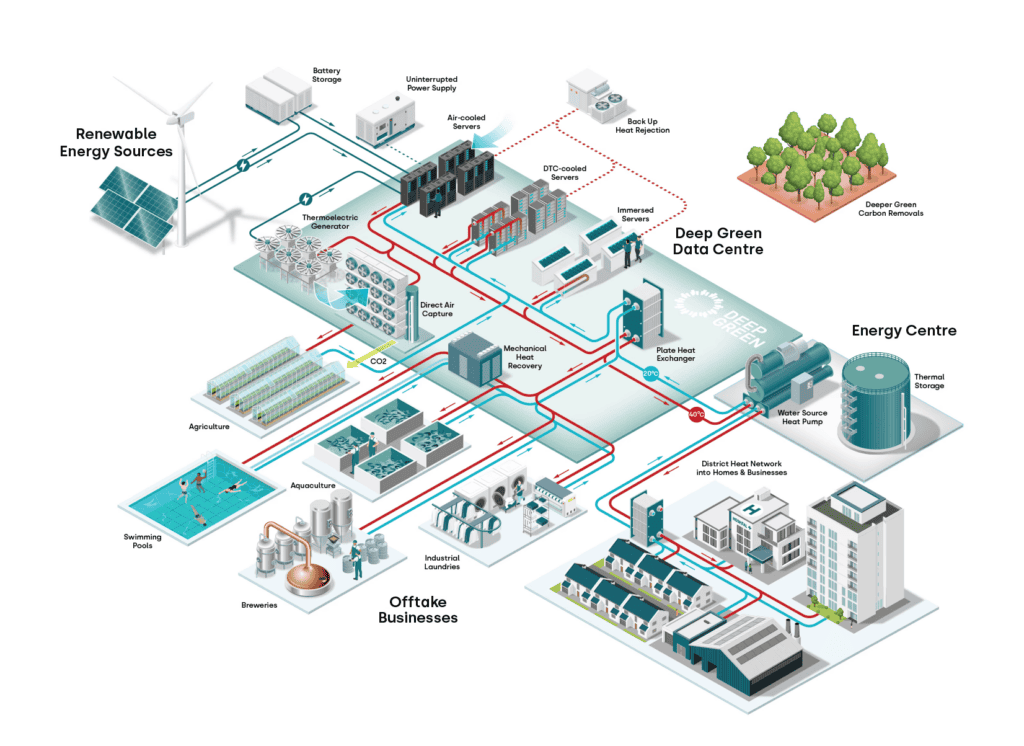

If centralised systems concentrate constraints, one response is to distribute them. Rather than scaling only through larger, denser facilities, infrastructure can be designed to operate across multiple locations. Compute can be placed closer to where power is available, where cooling is more efficient, or where workloads are generated.

This is not a new idea, but the current pressures on energy and cooling make it more relevant. Distributed and edge computing models allow organisations to:

- reduce pressure on single facilities

- take advantage of local energy availability

- align compute with physical and environmental conditions

- build more flexible and resilient systems

The constraint becomes a design input.

Trade-offs still apply

This shift does not remove complexity. It changes it. Distributed systems introduce new challenges. Coordination becomes harder. Latency must be managed. Data movement becomes more significant. Operational oversight extends across multiple environments.

The trade-offs remain.

- Do you centralise for simplicity or distribute for efficiency?

- Do you optimise for performance or for energy availability?

- Do you concentrate expertise or extend capability across locations?

What changes is the context in which these decisions are made. Energy and cooling are no longer secondary considerations. They sit alongside performance and cost as primary drivers of system design.

Designing for a different future

The broader implication of Mark’s talk is that the future of computing will not be defined solely by faster hardware or larger systems. It will be shaped by how we respond to physical constraints.

Distributed computing, edge environments, and alternative approaches to infrastructure design are not just technical trends. They are responses to the realities of power and cooling. In that sense, the current moment is not only a challenge. It is a transition. The systems we build next may look different, not because we want them to, but because physical constraints require it.

Decisions that reshape infrastructure

The key insight is simple. Constraints do not only limit what we can build. They influence how we think about building it. As power and cooling become central concerns, infrastructure decisions must account for more than just performance. They must consider where compute runs, how it is distributed, and how it interacts with the physical world.

Scaling is still possible. But it may no longer mean building bigger systems in one place. It may mean building differently.

Want to watch the complete presentation? Head to our speakers channel.

This article is part of the SR25 series examining how researchers and engineers make decisions in rapidly evolving technological fields.